Every few months, the internet rediscovers AI and treats it like a magic button. Type a topic, hit enter, and expect a perfect article, research report, or strategy note in seconds. At first glance, this feels efficient. However, most founders quickly realise that generic prompts produce generic thinking. The output may look polished, but beneath the surface it is often shallow, repetitive, and occasionally inaccurate.

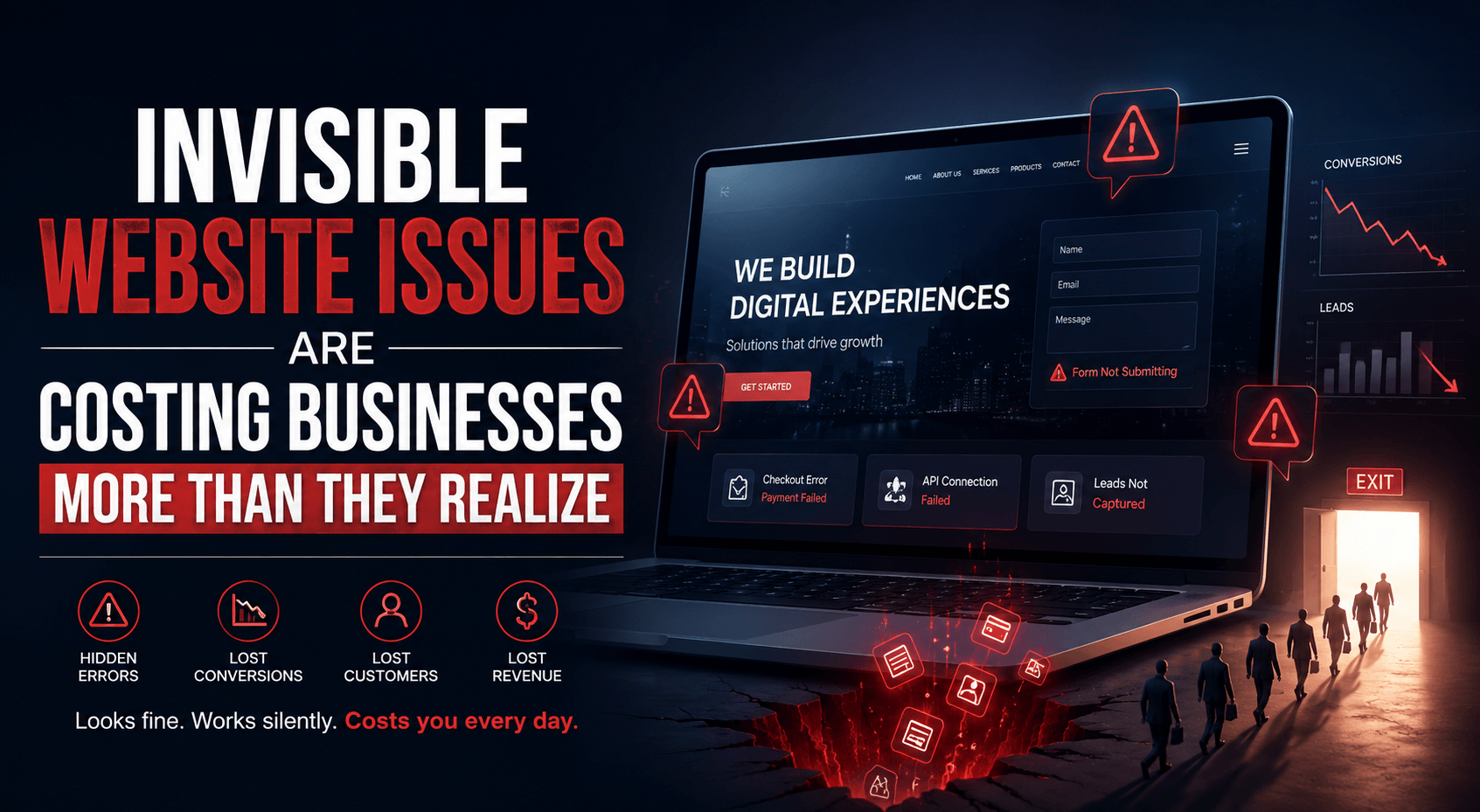

The real issue is not the model but how we use it. When someone types “write me an article on this topic,” the system does exactly what it is asked to do: it generates a clean summary based on patterns. There is no structured research, no layered thinking, and no verification. As a result, the content looks presentable but lacks depth and reliability.

The Game Changer: From a writer to a researcher

This is where structured Chain of Thought prompting quietly changes the entire game. Instead of treating AI like a writer, the idea is to turn it into a research assistant that works step by step. The shift is small but powerful. You are no longer asking for finished content immediately. You are asking the system to think, gather, analyse, and organise information before writing anything. That single change transforms the quality of output.

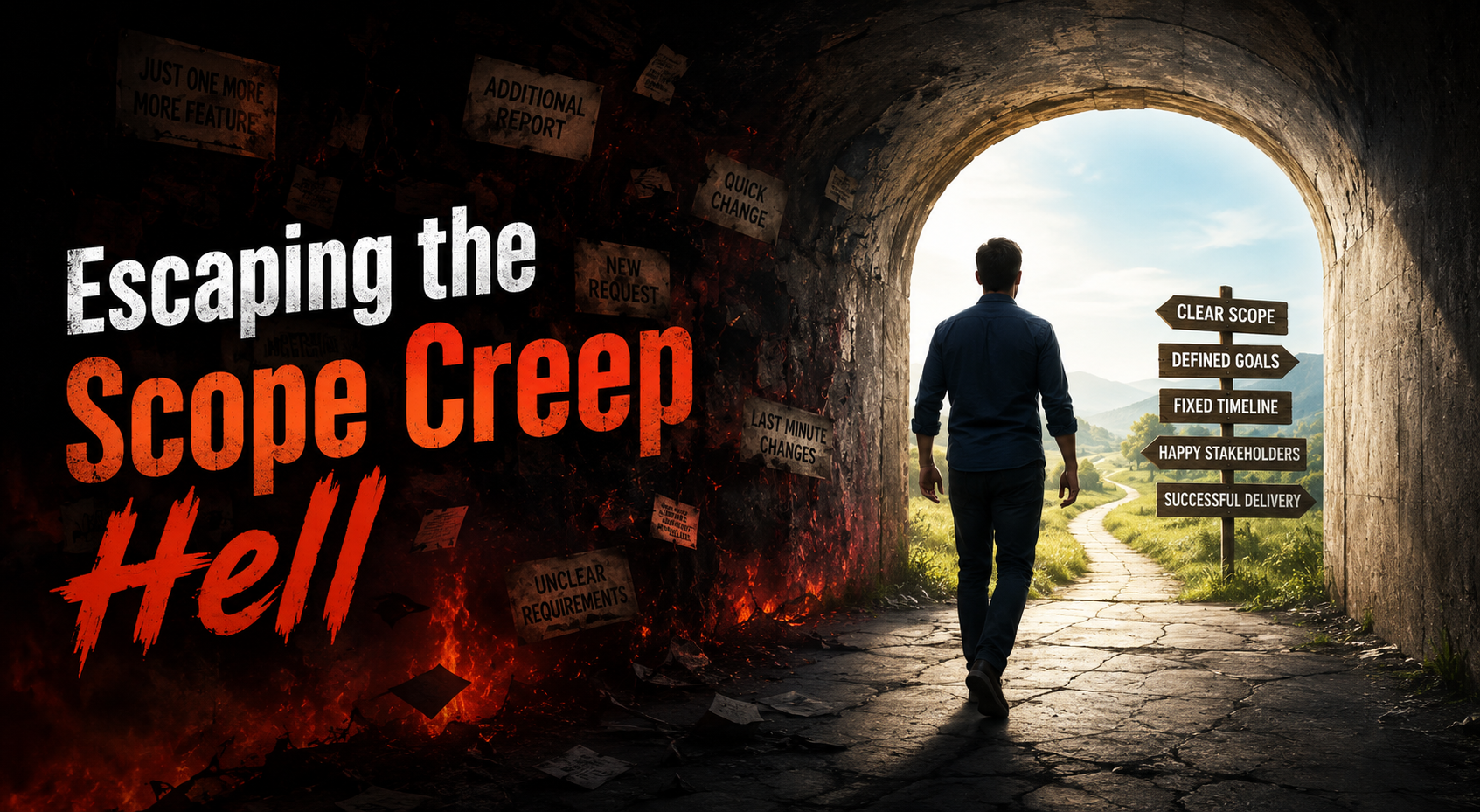

The process – Identify > Extract > Analyse > Create

The process usually begins with identifying credible sources. This step forces the system to ground its response in real material such as research papers, industry reports, earnings calls, or verified data. As a result, the output becomes traceable and far more reliable. Hallucinations tend to decrease because the model is anchored to named references instead of guessing from patterns.

Once sources are identified, the next step is extracting core insights. This is where the system behaves like a disciplined analyst rather than a content generator. It separates meaningful information from noise and focuses on key claims, important statistics, and relevant findings. The result is a sharper and more focused research base that can actually support decision making.

The real power, however, appears in the analysis stage. When the AI is asked to study trends, contradictions, and patterns, it begins to connect the dots. Instead of repeating information, it starts explaining what the data means and why it matters. Therefore, the output becomes more thoughtful and strategic. It feels less like a blog and more like a structured research briefing that a founder can actually use.

Structuring the final findings into bullet points makes the entire output practical and easy to consume. Bullet points reduce unnecessary fluff and allow the information to be reused in multiple formats. Whether it is a presentation, internal memo, newsletter, or strategy document, structured insights travel better than long paragraphs. This also makes iteration easier because specific sections can be expanded or refined without rebuilding everything from scratch.

The new junior research analyst in the house

The cumulative effect of this structured approach is surprisingly strong. AI stops behaving like a writer and starts behaving like a junior research analyst. Founders remain in control of the vision and direction, while the system handles the heavy lifting of gathering and organising information. This balance is where real leverage begins to appear.

In real-world scenarios, this structured prompting pattern tends to improve research speed and clarity. Teams move faster because the system delivers organised insights instead of scattered paragraphs. Decision making becomes easier because the output highlights trends, risks, and opportunities clearly. Most importantly, the research feels usable rather than decorative.

To make this approach even stronger, a few practical enhancements can be added. Instead of just collecting sources, the system can rank them by credibility and recency. Instead of only summarising claims, it can cross-verify them across multiple references. Instead of only presenting conclusions, it can highlight uncertainties and potential biases. These small refinements make the research more balanced and trustworthy.

A refined version of this structured CoT workflow typically looks like this:

- Identify credible and recent sources and rank them by reliability

- Extract key insights and important data points from each source

- Analyse trends, contradictions, and second-order effects

- Cross-verify claims across multiple independent references

- Synthesise findings into structured and scannable bullet points

- Highlight implications for the specific industry or business

This structure turns AI into a practical research engine rather than a passive writing tool. It also allows founders to add constraints such as focusing on recent data, specific markets, or targeted company segments, which makes the output even more precise and relevant.
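As a rough illustration, the workflow and its optional constraints can be assembled into a single reusable prompt. The sketch below is only one way to do it: the function name `build_research_prompt`, the exact instruction wording, and the example topic and constraints are all assumptions made for illustration, not part of any particular tool or model API. It builds a text prompt and nothing more; sending it to a model is left to whatever interface you already use.

```python
from typing import Sequence

# The six workflow steps listed above, kept in order.
STEPS = [
    "Identify credible and recent sources and rank them by reliability",
    "Extract key insights and important data points from each source",
    "Analyse trends, contradictions, and second-order effects",
    "Cross-verify claims across multiple independent references",
    "Synthesise findings into structured and scannable bullet points",
    "Highlight implications for the specific industry or business",
]


def build_research_prompt(topic: str, constraints: Sequence[str] = ()) -> str:
    """Assemble a step-by-step research prompt (hypothetical helper)."""
    lines = [
        f"Research topic: {topic}",
        "",
        # Illustrative wording: ask for each step's result before the next.
        "Work through these steps in order and show the result of each "
        "step before moving on:",
    ]
    # Number the steps so the model can be asked to revisit one by number.
    lines += [f"{i}. {step}" for i, step in enumerate(STEPS, start=1)]

    # Optional constraints, e.g. recency, market, or company segment.
    if constraints:
        lines += ["", "Constraints:"]
        lines += [f"- {c}" for c in constraints]

    # Illustrative closing instruction: research output, not a finished article.
    lines += ["", "Do not write a finished article. Flag any claim you could not verify."]
    return "\n".join(lines)


if __name__ == "__main__":
    # Example topic and constraints are placeholders.
    print(build_research_prompt(
        "EV charging infrastructure",
        ["Focus on data from the last 12 months", "Limit to the Indian market"],
    ))
```

Keeping the steps in a list makes iteration easier: a single step can be reworded or a constraint added without rewriting the whole prompt.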

The bigger lesson here is simple but important. AI works best when it is treated like a system, not a shortcut. The people who get the most value are not the ones asking for quick articles. They are the ones building structured workflows that guide the system step by step. They understand that vision and judgment come from humans, while structured analysis and data processing can be delegated.

This approach can create a lasting advantage. Founders can focus on strategy, decision making, and execution while the system handles research, organisation, and preliminary analysis. The bottom line is that this is not about replacing human thinking; it is about amplifying it.

Structured Chain of Thought prompting is therefore not just another AI trick. It is a practical framework for turning AI into a reliable research partner. The moment you stop asking it to write and start asking it to think, the quality of output changes dramatically. And that is where real leverage begins. To learn more about Structured Chain of Thought prompting, watch: